Simple query optimisation

Query optimisation is one of my favourite things to do because you can get extra performance in response times and because we will use fewer resources in your database.

To optimise queries properly, you have to look at the queries but also know something about your application’s domain. I will take you along in a straightforward one I did today.

So starting from the following query:

EXPLAIN ANALYZE SELECT COUNT(*)

FROM "bookings"

WHERE "bookings"."state" = 'confirmed' AND (ends_at < '2022-05-24');

Aggregate (cost=88226.78..88226.79 rows=1 width=8) (actual time=127.844..127.845 rows=1 loops=1)

-> Bitmap Heap Scan on bookings (cost=12225.25..88146.35 rows=32171 width=0) (actual time=55.488..125.832 rows=39106 loops=1)

Recheck Cond: (((state)::text = 'confirmed'::text) AND (ends_at < '2022-05-24'::date))

Heap Blocks: exact=30135

-> BitmapAnd (cost=12225.25..12225.25 rows=32171 width=0) (actual time=49.448..49.449 rows=0 loops=1)

-> Bitmap Index Scan on index_bookings_on_state (cost=0.00..2482.18 rows=40768 width=0) (actual time=21.448..21.448 rows=41685 loops=1)

Index Cond: ((state)::text = 'confirmed'::text)

-> Bitmap Index Scan on index_bookings_on_ends_at (cost=0.00..9726.74 rows=198709 width=0) (actual time=26.043..26.043 rows=198490 loops=1)

Index Cond: (ends_at < '2022-05-24'::date)

Planning time: 0.616 ms

Execution time: 128.003 ms

We can see from the explain-analyse output that we first do a Bitmap Index Scan on index_bookings_on_ends_at. This means that Postgres scans the index to find the records that match the given where condition Index Cond: (ends_at < '2022-05-24'::date), which led to 198490 rows (rows=198490).

Then a scan is done on Bitmap Index Scan on index_bookings_on_state with the condition ((state)::text = 'confirmed'::text) which led to 41685 rows (rows=41685) .

These things happen because there is an index separately on ends_at and state. This means that PostgreSQL has to figure out which records satisfy both conditions and that information is spread out and needs to be combined again.

Combining, at least as I understand it, happens in the BitmapAnd operation. Then the rows are all fetched from their physical storage and scanned in the Bitmap Heap Scan to see if it is true that they satisfy both conditions, hence the Recheck Cond: (((state)::text = 'confirmed'::text) AND (ends_at < '2022-05-24'::date)).

This sounds like a lot of work. You might have guessed how we can improve this: we have to create a single index for state and ends_at. Let’s see what this does.

We create the index like so:

CREATE INDEX index_ends_at_state ON bookings(ends_at date_ops,state text_ops);

Let’s re-run our query:

Aggregate (cost=5625.65..5625.66 rows=1 width=8) (actual time=35.506..35.506 rows=1 loops=1)

-> Index Only Scan using index_ends_at_state on bookings (cost=0.42..5545.22 rows=32171 width=0) (actual time=0.241..30.923 rows=39106 loops=1)

Index Cond: ((ends_at < '2022-05-24'::date) AND (state = 'confirmed'::text))

Heap Fetches: 0

Planning time: 0.280 ms

Execution time: 35.554 ms

Nice! As expected, this is quicker: from 128.003 ms to 35.554 ms, which is 3.6 times faster or 92ms per call less.

Why care?

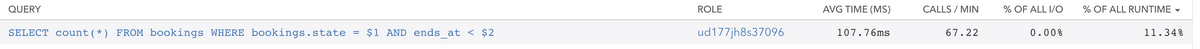

Whether it should be or not, this query is being called ~70 times per minute at the time of writing. This means 70 * 0,92 = 6,44 seconds/minute of savings. Pretty good, but more broadly speaking, we save some resources.

But wait, there is more!

So here is where a bit of domain knowledge can help us further improve the query’s speed.

We have defined our index with ends_at first and then state, which means that Postgres will first collect all the index entries that match the ends_at condition and then traverse its tree to reach the correct state.

As you’ll recall, our ends_at condition resulted in 198709 rows in the separate index scan, whereas the condition on state resulted in 40768 rows.

This means that ends_at limits the rows less than state.

Let’s swap the columns in the index to see if this will help.

DROP INDEX index_ends_at_state;

CREATE INDEX index_state_ends_at ON bookings(state text_ops,ends_at date_ops);

Now the query plan looks like this:

Aggregate (cost=1248.27..1248.28 rows=1 width=8) (actual time=24.197..24.198 rows=1 loops=1)

-> Index Only Scan using index_ends_at_state on bookings (cost=0.42..1167.84 rows=32171 width=0) (actual time=0.085..17.707 rows=39106 loops=1)

Index Cond: ((state = 'confirmed'::text) AND (ends_at < '2022-05-24'::date))

Heap Fetches: 0

Planning time: 0.255 ms

Execution time: 24.242 ms

Firstly you might look at the new execution time: 24 ms, which is quicker but could be a coincidence. It will go from 17-30 ms execution time every time you run this. So it feels faster, but is it?

Let’s have a look at the costs:

- Separate indexes:

Aggregate (cost=88226.78..88226.79 rows=1 width=8) ends_at, state:Aggregate (cost=5625.65..5625.66 rows=1 width=8)state, ends_at:Aggregate (cost=1248.27..1248.28 rows=1 width=8)

We can see that the calculated execution costs went from 88k to 1,2k, and swapping the columns made it 4,3x cheaper to run.

So here you have it, a quick exploration of how using indexes according to what your application runs them and a bit of domain knowledge can help you squeeze out some extra speed and resources.